OpenAI CEO Sam Altman testified before Congress in Washington, D.C., this week about regulating artificial intelligence, as well as about his personal fears over the technology and what “scary” AI systems mean to him.

Fox News Digital also asked OpenAI’s wildly popular chatbot, ChatGPT, to weigh in on examples of “scary” artificial intelligence systems, and it offered six hypothetical instances of how AI could become weaponized or have harmful impacts on society.

When asked by Fox News Digital on Tuesday after his testimony before a Senate Judiciary subcommittee, Altman gave examples of “scary AI” that included systems that could design “novel biological pathogens.”

“An AI that could hack into computer systems,” he continued. “I think these are all scary. These systems can become quite powerful, which is why I was happy to be here today and why I think this is so important.”

Advanced autonomous weapon system

When asked for examples of “scary AI,” ChatGPT responded, “These [autonomous weapon systems], often referred to as ‘killer robots’ or ‘lethal autonomous weapons,’ raise ethical concerns and the potential for misuse or unintended consequences.” (Costfoto / Future Publishing via Getty Images)

Following Altman’s comments on scary AI and other tech leaders sounding the alarm on misused AI, Fox News Digital asked ChatGPT on Wednesday to provide an example of “scary AI.”

“An example of ‘scary AI’ is an advanced autonomous weapon system that can independently identify and attack targets without human intervention,” the chatbot responded. “These systems, often referred to as ‘killer robots’ or ‘lethal autonomous weapons,’ raise ethical concerns and the potential for misuse or unintended consequences.”

ChatGPT said to “imagine a scenario where such an AI-powered weapon system is programmed with a set of criteria to identify and eliminate potential threats.” If such a system malfunctioned or wound up in the hands of bad actors, “it could lead to indiscriminate targeting, causing widespread destruction and loss of life.”

Deepfakes hit the public’s radar in 2017 after a Reddit user posted realistic-looking pornography of celebrities to the platform. Deepfakes have since become more widely used and convincing, and they have led to phony videos, such as comedian Jerry Seinfeld starring in the classic 1994 crime drama “Pulp Fiction” or rapper Snoop Dogg appearing in a fake infomercial promoting tarot card readings.


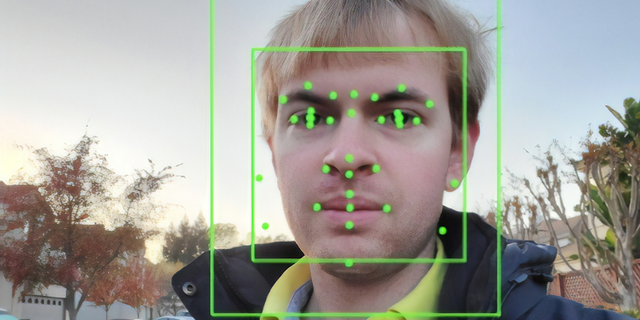

AI-powered surveillance

ChatGPT also listed “AI-powered surveillance” systems used by bad actors, such as “authoritarian regimes,” as an example of “scary AI.” (Smith Collection / Gado / Getty Images / File)

The chatbot next listed “AI-powered surveillance” systems used by bad actors, such as “authoritarian regimes,” as an example of “scary AI.”

ChatGPT also warned about malicious AI-powered bots. “These bots can be used for phishing attacks, spreading propaganda or influencing public opinion through social media platforms, amplifying the potential for misinformation and societal division,” the chatbot said.

When asked to elaborate on the threats chatbots could pose, ChatGPT said such technology can mimic human conversation and “convincingly interact with individuals.” Chatbots could then “exploit psychological vulnerabilities” by analyzing people’s responses, potentially leading them to divulge sensitive personal information or to believe false information, or the systems could manipulate human “emotions for malicious purposes.”


Reports that AI could displace jobs have caused some anxiety among workers, particularly those in fields most likely to be affected by the technology, such as customer service, but proponents say the proliferation of AI could lead to new jobs and a stronger economy.


AI bias and discrimination

ChatGPT said AI systems can perpetuate bias and discrimination depending on how they are trained, which could amplify “existing societal inequalities.”

ChatGPT can sometimes “hallucinate,” meaning it can respond to a query with an answer that sounds correct but is actually made up. (Getty Images / File)

In its responses about “scary AI,” ChatGPT stressed that although its examples shine a light on the “potential negative implications of AI, they also reflect the importance of responsible development, regulation and ethical considerations to mitigate such risks and ensure the beneficial and safe use of AI technologies.”


Altman said during the Senate hearing Tuesday that his greatest fear as OpenAI develops artificial intelligence is that it causes major harmful disruptions for people.

Altman also said during the hearing that he welcomes the opportunity to work with U.S. lawmakers on crafting AI regulations that would help prevent unwanted outcomes from the technology.